Keeping you on Track

Remote Work Surveillance Software, Productivity Metrics, and Platform Managerialism

By Ali S. Qadeer and Edward Millar

Volume 24, Number 2, Don’t Be Evil

“Are you a high-priced man, or not?”

In his 1911 book The Principles of Scientific Management, Frederick Winslow Taylor recounts the story of “Schmidt,” one of his victories while employed by the Bethlehem Steel Company to help streamline its operations. By Taylor’s calculations, a “first class pig iron handler” should have been capable of handling 47 tons of pig iron per day, but the company’s workers were averaging a paltry 12.5 tons. Taylor’s task was to dramatically increase the pace of work without triggering a strike. For his experiment, he picked out a migrant worker whom he describes as physically fit but “of the mentally sluggish type.”1 By Taylor’s account, his mark was easy to manipulate. “Schmidt” was singled out, pulled aside, and asked a simple question: “Are you a high-priced man?” If the worker could follow Taylor’s exact instructions without questioning or complaining, he would be eligible for a modest wage increase. Intrigued, “Schmidt” obliged and raised his daily output to the magic number. In short order, the others followed suit.

The “Schmidt” tale is part of the origin story of Taylor’s theory of scientific management, a philosophy of work based on the rationalization of the labor process; it envisions how metrics and measurement can be wielded to persuade workers to internalize a capitalist logic of efficiency. The labor process theorist Harry Braverman argues that rather than providing a neutral “science of work,” the “Taylorist” approach was more accurately described as “the science of the management of others’ work under capitalist conditions.”2 Acolytes of this philosophy adapted early media technologies to assist in their scrutiny of workers, using photography and film for time-and-motion studies that helped determine the “optimal” amount of time a task should take, measured in fractions of a second.

Workers in the spheres of production and circulation have always borne the brunt of technologies that automate, deskill, and surveil the labor process. As these technologies become more precise and sophisticated, they open up new avenues for monitoring workers. Amazon, for instance, has patented wristbands for its warehouse “associates” that would provide haptic feedback notifying them when their hands stray too far from an item.3 Kate Crawford, co-founder of the AI Now Institute at New York University, notes that McDonald’s cooks have their tasks broken down by machines into increments measured in seconds, where even “the slightest delay (a customer taking too long to order, a coffee machine failing, an employee calling in sick) can result in a cascading ripple of delays, warning sounds, and management notifications.”4

Researchers Nick Dyer-Witheford, Atle Mikkola Kjøsen, and James Steinhoff argue that ongoing advances in artificial intelligence and machine learning are likely to be applied across a diverse range of work environments, where they will intensify the overlapping processes of panoptics, precarity, and polarization between “high end” and “low end” work.5 One outcome is the proletarianization of new categories of work and the downgrading of jobs once considered white collar, as technological change has “hollowed out ‘middle level routine’ jobs in favor of either high-paying cognitive labour or low-paying manual work, albeit with downward pressures on both.”6 Larger numbers of workers are pushed into low-paying jobs while smaller numbers are elevated to higher-paid employment, and one marker of this emerging polarity is what the sociologist Judy Wajcman calls “temporal sovereignty”: the power to determine for oneself how work time should be spent.7 Management’s efforts to surveil, record, and regiment work have been hardcoded into software that helps regulate workplaces and work paces, sending automated reports that keep overseers alert to those who fall behind. As more work has shifted to remote environments, many of the tasks and roles of managers are being incorporated into software and platforms, creating new opportunities for the surveillance of workers.

Tools to Manage the Remote Workplace

With the large-scale shift to remote work that followed the onset of the COVID-19 pandemic, digital workplace management tools have proliferated rapidly. Vendors such as ActivTrak, Hubstaff, and Teramind all boasted a tripling of demand in the early months of the pandemic.8 Yet even as the scale and scope of remote work increase, the essence of managing “work from home” remains rooted in the principles of scientific management: tracking mouse movements, recording workers’ screens, and surmising attention and time on task.
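To make concrete what surmising attention and time on task from input devices can amount to, here is a minimal Python sketch of an activity-rate metric of the general kind such dashboards display. It assumes, hypothetically, that a client program logs, for each sampling window, how many seconds contained any keyboard or mouse input; the `Window` type, the `activity_rate` and `flag_low_activity` functions, and the 50 percent threshold are illustrative inventions, not the formula used by ActivTrak, Hubstaff, Teramind, or any other vendor.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """One hypothetical sampling window, e.g. ten minutes of logged desktop time."""
    total_seconds: int
    active_seconds: int  # seconds with at least one keyboard or mouse event


def activity_rate(windows: list[Window]) -> float:
    """Percentage of all logged seconds in which any input was detected."""
    total = sum(w.total_seconds for w in windows)
    active = sum(w.active_seconds for w in windows)
    return 100.0 * active / total if total else 0.0


def flag_low_activity(windows: list[Window], threshold: float = 50.0) -> list[int]:
    """Indices of windows whose own activity rate falls below a manager-set threshold."""
    return [
        i for i, w in enumerate(windows)
        if w.total_seconds and 100.0 * w.active_seconds / w.total_seconds < threshold
    ]


if __name__ == "__main__":
    # Three hypothetical ten-minute windows from one worker's morning.
    day = [Window(600, 540), Window(600, 120), Window(600, 300)]
    print(f"{activity_rate(day):.1f}% active")  # 53.3% active
    print(flag_low_activity(day))  # [1]
```

Note what such a metric cannot see: a worker reading a printed report, thinking through a problem, or on the phone with a client registers as idle, so the number measures input-device activity rather than work.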

The interfaces of managerial software rely on varying degrees of transparency and obscurity. On the one hand, platforms such as Hubstaff and Time Doctor use the same design language whether they are viewed by managers or by workers. These interfaces are presented as user-friendly and deploy UI patterns borrowed from normative interaction design: bright toggles and switches, graphs, loading panes, and friendly illustrations. They use the language of “team members” and “potential” to describe the value they add to the workplace ecosystem.

Hubstaff is marketed as an expanded project management tool. Workers receive task directives, log work hours, and interact with management all through the same platform. In essence, Hubstaff operates as middleware between employees and managers. To its worker end users, Hubstaff automates onerous tasks like timesheets while providing a simple and clear interface for self-reporting. The transparent design features imply some level of “opt-in” consent, where workers are encouraged to manage their own productivity and efficiency. However, Hubstaff’s managerial interface also comes equipped with an expanded toolset that includes location tracking, productivity metrics, and even periodic screen-capturing.


On the other hand, a different type of workplace management software operates much more like conventional spyware, with interfaces that remain largely invisible to workers even as they make workers’ labor highly visible, recording their every interaction and engagement. These more insidious forms of workplace surveillance eschew friendly collaborative language, advertising themselves instead as guards against “insider threats” and “time theft.” One example, Teramind, algorithmically tracks workers’ digital behavior for “deviations” and “risk,” and even provides customizable “policy tools” to monitor any behavior that management deems worthy of surveillance.9

Teramind offers two versions of its client-side software, one partially transparent and one completely hidden from its worker end users. Both are designed to track every aspect of worker behavior that can be captured via the computer, including screen recordings, audio capture, application usage, websites visited, emails, file transfers, printed documents, keystrokes, instant messaging, social media, network usage, and offline recording.10 Furthermore, the software deploys a machine learning-driven analytics engine that monitors worker communications against any set of policies, providing “automated alerts on detection of anomaly or deviation from the normal baseline.”11 In its promotional material, the company demonstrates how managers can set alerts triggered by specific terms in worker communications. Monitoring for workplace unionization, harassment complaints, and worker safety concerns becomes infinitely easier when all communication between workers can be intercepted, processed, sorted, and turned into fodder for managerial intelligence.
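Teramind does not publish the internals of its analytics engine, so the following is not its method. It is a minimal Python sketch, under stated assumptions, of the two simpler ideas the marketing gestures at: alerts set on specific terms in messages, and flagging a “deviation from the normal baseline,” here a plain z-score against a worker’s own history. The watch list, the function names `term_alerts` and `deviates_from_baseline`, the sample data, and the three-standard-deviation threshold are all hypothetical.

```python
import re
from statistics import mean, stdev

# Hypothetical watch list of the kind a manager could configure.
WATCH_TERMS = ["union", "overtime", "grievance"]


def term_alerts(messages: list[str], terms: list[str] = WATCH_TERMS) -> list[tuple[int, str]]:
    """Return (message index, term) for every whole-word, case-insensitive match."""
    hits = []
    for i, text in enumerate(messages):
        for term in terms:
            if re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE):
                hits.append((i, term))
    return hits


def deviates_from_baseline(history: list[float], today: float, z_threshold: float = 3.0) -> bool:
    """Flag today's value if it lies more than z_threshold sample standard deviations from the historical mean."""
    if len(history) < 2:
        return False  # too little data to estimate a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu  # any change from a perfectly flat history counts
    return abs(today - mu) / sigma > z_threshold


if __name__ == "__main__":
    chat = ["Lunch at noon?", "Should we talk to the union rep?", "Filed a grievance about overtime"]
    print(term_alerts(chat))  # [(1, 'union'), (2, 'overtime'), (2, 'grievance')]
    emails_sent = [40, 42, 38, 41, 39]  # hypothetical daily counts
    print(deviates_from_baseline(emails_sent, 12))  # True
    print(deviates_from_baseline(emails_sent, 41))  # False
```

Even this crude version shows how little effort surveillance of organizing requires: putting “union” on a watch list takes one line.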

Black-Boxing and Black-Balling

Workplace surveillance software offers a way to circumvent the already insufficient laws protecting worker privacy by moving toward “opt-in” monitoring schemes governed by illegible terms-of-service contracts. This arrangement makes a mockery of any meaningful definition of consent, especially in the coercive context of employer/employee power dynamics. Even more problematic is the ability of some of these stealth systems to be activated by employers on a whim, without employees’ knowledge, subjecting workers’ lives to tracking and scrutiny even when they are off the clock.12

The data-hungry landscape of “platform capitalism” has opened further opportunities for firms to bring different categories of labor under the aegis of “limitless worker surveillance.”13 Workforce management systems coexist with incentives for employees to manage their own time through productivity apps, opening up the possibility of 24/7 monitoring of worker efficiency.14 As author Melissa Gregg points out, apps like RescueTime and Vitamin R can be joined with project management software like OmniFocus, creating an integrated system that can “allow users to track work / life cycles by day, hour, or minute so that energy levels can be identified for improvement or greater discipline.”15 The effect of this, she argues, is the intensification of temporal regimes imposed on workers, ordering work practices while obscuring “the politics of labor and its delegation in the quest to maximize efficiency.”16

Together, workplace management software, worker surveillance tools, and productivity apps constitute what law professor Ifeoma Ajunwa calls the “black box at work,” where the most intimate aspects of a worker’s life can be treated as fodder for data reputation scorecards that are then subject to the will and whims of management.17 At the same time, the inner workings of these inscrutable data-collection tools are hidden from the workers whose lives they affect, in ways that are, by design, difficult to make sense of.18 When the data collected by these platforms are scaled up, Ajunwa argues, there is a risk of “algorithmic black-balling,” where a reputation score generated by an opaque and legally dubious algorithmic classification system becomes a stain that can follow a worker through future job searches and workplace experiences.19 Although companies may promise to refrain from sharing or selling the data they collect, murky privacy practices leave open the possibility of “mission creep” in the collection and leakage of employee performance data.20 If these data can be made accessible to candidate recruitment algorithms and automated hiring software, there is a potential for “data-laundering,” where the presumption of algorithmic impartiality is used as a screen to conceal bias and discrimination.21


Mechanisms for determining the efficiency of workers and algorithmically sorting job candidates are not neutral. The consequences of workplace surveillance systems for workers must be contextualized in relation to the larger body of emerging work about the discriminatory nature of platform design, which reifies racialized and gendered inequities into a “new Jim code,” subjecting already marginalized people to the machinations of cryptic socio-technical systems that produce “digital redlining” outcomes.22 Ajunwa argues that the “black-boxed” nature of automated hiring platforms whose inner workings are protected by intellectual property laws can mean that proxies are used in place of protected categories like race and gender, resulting in a system where applicants can be automatically culled without retaining any record about who has been rejected or on what grounds. Without any legal oversight over the portability of worker data from one platform or system to another, there is a chance of “repeated employment discrimination, thus raising the specter of an algorithmically permanently excluded class of job applicants.”23
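Ajunwa’s point about proxies can be illustrated with a deliberately tiny, hypothetical example in Python. The screening rule below never reads the protected attribute, yet because postal prefix tracks group membership in this invented data, pass rates diverge sharply; and because rejected applicants are simply filtered out, nothing records who was dropped or why. The `Applicant` fields, the `PREFERRED_PREFIXES` rule, the helper functions, and the sample pool are assumptions made for illustration, not drawn from any real hiring system.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    postal_prefix: str  # the only field the screening rule reads
    group: str          # protected attribute; never read by the rule


# Hypothetical rule distilled from past hires, who mostly lived in two areas.
PREFERRED_PREFIXES = {"A1", "B2"}


def screen(applicants: list[Applicant]) -> list[Applicant]:
    """Keep applicants from preferred areas; rejections are dropped with no record kept."""
    return [a for a in applicants if a.postal_prefix in PREFERRED_PREFIXES]


def pass_rate_by_group(applicants: list[Applicant], passed: list[Applicant]) -> dict[str, float]:
    """The audit the black box never runs: compare pass rates across the protected attribute."""
    passed_names = {a.name for a in passed}
    rates = {}
    for g in sorted({a.group for a in applicants}):
        members = [a for a in applicants if a.group == g]
        rates[g] = sum(a.name in passed_names for a in members) / len(members)
    return rates


if __name__ == "__main__":
    pool = [
        Applicant("P1", "A1", "x"), Applicant("P2", "B2", "x"), Applicant("P3", "C3", "x"),
        Applicant("P4", "C3", "y"), Applicant("P5", "D4", "y"), Applicant("P6", "A1", "y"),
    ]
    rates = pass_rate_by_group(pool, screen(pool))
    print({g: round(r, 2) for g, r in rates.items()})  # {'x': 0.67, 'y': 0.33}
```

The disparity only becomes visible because the sketch runs an audit against the protected attribute, which is exactly the step a black-boxed, record-free system leaves out.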

Fully Automated Luxury Taylorism

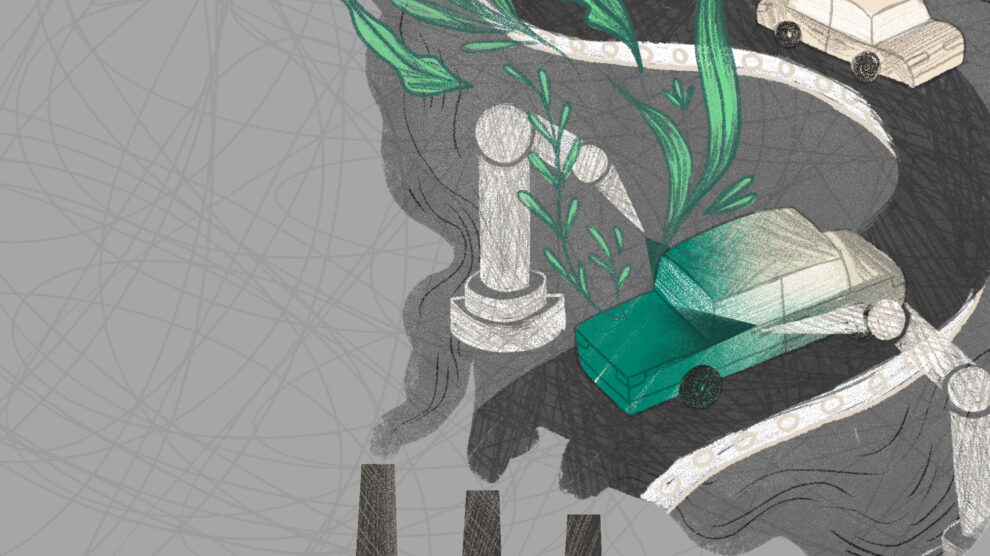

At first glance, the post-pandemic landscape of remote work management software may appear to be an outcome of what Shoshana Zuboff labels “surveillance capitalism,” an apparently seismic political-economic shift in which personal data is treated as a new resource to be extracted and even the most abstract human relations are up for commodification. However, by situating these apps and platforms in relation to the work of 20th-century labor process studies, we can recognize that worker surveillance systems are as indebted to old-school, industrial capitalism as they are to bleeding-edge gadgetry. Inasmuch as capitalists have an imperative to extract as much surplus value as they can from workers throughout the working day, all capitalism by nature is, and has always been, “surveillance capitalism.” These tools and techniques should not be understood as the result of a broader social shift towards some general “surveillance society,” but rather as the predictable results of the management and organization of work under capitalist production.

The perennial question that socialists and communists have wrestled with is under which conditions automation tools might be able to help workers, rather than squeeze more value out of them. Although Lenin was critical of how scientific management methods were deployed as instruments of capital to intensify the exploitation of workers, he also suggested that methods for rationalizing the labor process could strengthen the power of worker committees, reduce work time, and make workers better off.24 Alexei Gastev, the director of Moscow’s Central Institute of Labour, advocated for a reconciliation of Taylorism and Marxism, demanding a detailed study of workers in the service of optimizing labor discipline and workplace uniformity.25 More recently, there have been calls from left-wing accelerationists to automate more and automate faster, alongside semi-ironic memes campaigning for “fully automated luxury space communism.” However well-intentioned, such faith in the emancipatory potential of automation technology risks sounding like a funhouse mirror fantasy of the techno-utopianism of Californian ideologues and scientific managers.26

Is it possible to build more liberatory managerial software? Some positive signs include the work done by the Platform Cooperativism Consortium, a network of developers, activists, and workers building tools to facilitate worker-based co-operative ownership and management of digital service platforms. Scholar-activist Trebor Scholz argues that the platform co-op movement is “about cloning the technological heart of Uber, Task Rabbit, AirBnB, or Upwork,” in a way that “embraces the technology but wants to put it to work with a different ownership model, adhering to democratic values so as to crack the broken system of the sharing economy/on-demand economy that only benefits the few.”27 The goal of the platform cooperativism model is to leverage managerial software to make managers redundant, rather than increase their power over workers. This model proposes similar software to automate task management within the service design field, but without the pressure to extract more value out of workers. When workers co-own the platforms, they can have full control over how their data is collected and used, allowing them to improve workplace efficiency in ways that do not increase the intensity of work or make it more strenuous.28

Platform cooperatives point us towards a future where workplace management software might be deployed in a post-capitalist context. When worker-owners are involved in designing and controlling software, they are able to leverage its capabilities to improve their own lives. At the same time, it is clear that attempts to improve fairness and worker autonomy must not come from above or rely on developing technological solutions, but instead occur alongside large-scale systemic changes. Building emancipatory workplace tech is not about tinkering around the edges of platform design, but rather about creating, to borrow from Marx, a future where workers are free to “hunt in the morning, fish in the afternoon, rear cattle in the evening, and criticize after dinner,” without a push notification informing them that they have exceeded their daily allotment of time devoted to criticism by 11 percent.29


Notes

- Frederick Winslow Taylor, The Principles of Scientific Management (New York: Harper and Brothers, 1911).

- Harry Braverman, Labor and Monopoly Capital (New York: Monthly Review Press, 1998), 62.

- Richard Salame, “The New Taylorism,” Jacobin, February 20, 2018, https://www.jacobinmag.com/2018/02/amazon-wristband-surveillance-scientific-management.

- Kate Crawford, Atlas of AI (New Haven: Yale University Press, 2021), 74–75.

- Nick Dyer-Witheford, Atle Mikkola Kjøsen, and James Steinhoff, Inhuman Power: Artificial Intelligence and the Future of Capitalism (London: Pluto Press, 2019), 87–97.

- Dyer-Witheford et al., Inhuman Power, 94.

- Melissa Gregg, Counterproductive: Time Management in the Knowledge Economy (Durham: Duke University Press, 2018), 7.

- Polly Mosendz and Anders Melin, “Bosses Panic-Buy Spy Software to Keep Tabs on Remote Workers,” Bloomberg, March 27, 2020, https://www.bloomberg.com/news/features/2020-03-27/bosses-panic-buy-spy-software-to-keep-tabs-on-remote-workers.

- “Teramind: User Activity Monitoring & DLP, Teramind in 10 minutes: Know your insiders!” October 10, 2018, YouTube video, 10:02, https://www.youtube.com/watch?v=Gc021ChUXUo. Retrieved May 6, 2021.

- “Teramind: User Activity Monitoring & DLP.”

- “Teramind,” About, Teramind, accessed May 20, 2021, https://www.teramind.co/features/ueba-user-and-entity-behavior-analytics.

- Phoebe Moore, “Precarity 4.0: A political economy of new materialism and the quantified worker” in The Quantified Self in Precarity: Work, Technology, and What Counts (Routledge, 2018), 79–139.

- Nick Srnicek, Platform Capitalism (Cambridge: Polity Press, 2016); Ifeoma Ajunwa, Kate Crawford, and Jason Schultz, “Limitless Worker Surveillance,” California Law Review 105, no. 3 (June 2017): 769.

- Phoebe Moore and Lukasz Piwek, “Regulating Wellbeing in the Brave New Quantified Workplace,” Employee Relations 39, no. 3 (October 2016): 308–316.

- Gregg, Counterproductive, 91.

- Gregg, Counterproductive, 95.

- Ifeoma Ajunwa, “The ‘Black Box’ at Work,” Big Data & Society 7, no. 2 (July 2020): 1–6.

- Phoebe Moore and Simon Joyce, “Black Box or Hidden Abode: The Expansion and Exposure of Platform Work Managerialism,” Review of International Political Economy 27, no. 4 (June 2019): 926–948.

- Ajunwa, “Black Box,” 4.

- Ajunwa, “Black Box,” 3.

- Ajunwa, “Black Box,” 3.

- Ruha Benjamin, Race After Technology: Abolitionist Tools for the New Jim Code (Cambridge: Polity Press, 2019).

- Ifeoma Ajunwa, “The Auditing Imperative for Automated Hiring,” Harvard Journal of Law & Technology 34, no. 2 (2021): 10.

- Vladimir Ilyich Lenin, “The Taylor System: Man’s Enslavement by the Machine,” Put Pravdy, no. 35 (March 13, 1914), in Collected Works, vol. 20 (Moscow: Progress Publishers, 1972), 152–154, https://www.marxists.org/archive/lenin/works/1914/mar/13.htm.

- Gavin Mueller, Breaking Things at Work: The Luddites Were Right About Why You Hate Your Job (New York: Verso, 2021), 47–52.

- Richard Barbrook and Andy Cameron, “The Californian Ideology,” Mute, September 1, 1995, https://www.metamute.org/editorial/articles/californian-ideology.

- Trebor Scholz, Platform Cooperativism: Challenging the Corporate Sharing Economy (New York: Rosa Luxemburg Foundation, 2016), https://rosalux.nyc/wp-content/uploads/2020/11/RLS-NYC_platformcoop.pdf.

- Platform Cooperativism Consortium, “How platform co-ops can benefit technologists,” accessed June 15, 2021, https://platform.coop/about/benefits/technologists/.

- Karl Marx, The German Ideology (libcom e-book), 69.